Last updated: March 7, 2026

Key Takeaways

- Real-time data integration enables autonomous AI agents to make accurate decisions instantly, while batch processing creates dangerous delays that undermine agent effectiveness

- Over 54% of enterprises now prioritize real-time data exchange over batch processing, marking a fundamental shift in how businesses handle information [1]

- Agentic AI systems require live data feeds to detect anomalies, respond to demand shifts, and optimize operations autonomously without human intervention

- Customer expectations have made real-time responses mandatory: 80% of customers expect immediate interactions, and 73% of B2B buyers demand the same speed [1]

- iPaaS platforms reduce integration cycles by 30% and API processing time by 25%, directly improving business outcomes and customer satisfaction [1]

- Batch syncs are no longer acceptable for enterprise-tier adoption in 2026, with event-driven architectures becoming the baseline requirement [4]

- AI value is constrained by data quality and availability, not model sophistication, making real-time, clean data infrastructure critical [3]

- Over 80% of enterprise SaaS applications will embed AI-driven capabilities as standard features by 2026, all dependent on live data feeds [2]

Quick Answer

Live data beats batch processing for agentic SaaS workflows in 2026 for one reason: autonomous AI agents cannot make accurate decisions on stale information. When agentic systems rely on batch-processed data updated hourly or daily, they operate on outdated assumptions that lead to inventory errors, missed opportunities, and poor customer experiences. Live data feeds let agents detect anomalies as they happen, respond to demand shifts in real time, and optimize operations continuously without human intervention. With over 54% of enterprises now prioritizing real-time data exchange and 80% of customers expecting immediate responses, batch processing has become a competitive liability that undermines the value proposition of agentic AI [1].

What Is Real-Time Data Integration and Why Does It Matter for Agentic Workflows?

Real-time data integration is the continuous, event-driven flow of information between systems with latency measured in seconds or milliseconds, not hours or days. For agentic SaaS workflows—where autonomous AI agents make business decisions without human approval—this immediacy is not optional but foundational.

Agentic AI systems are designed to handle significant portions of business decisions autonomously. By 2026, these systems are projected to automate 15% of work decisions and cut support time by over 50% [2]. But this automation only delivers value when agents operate on current, accurate information.

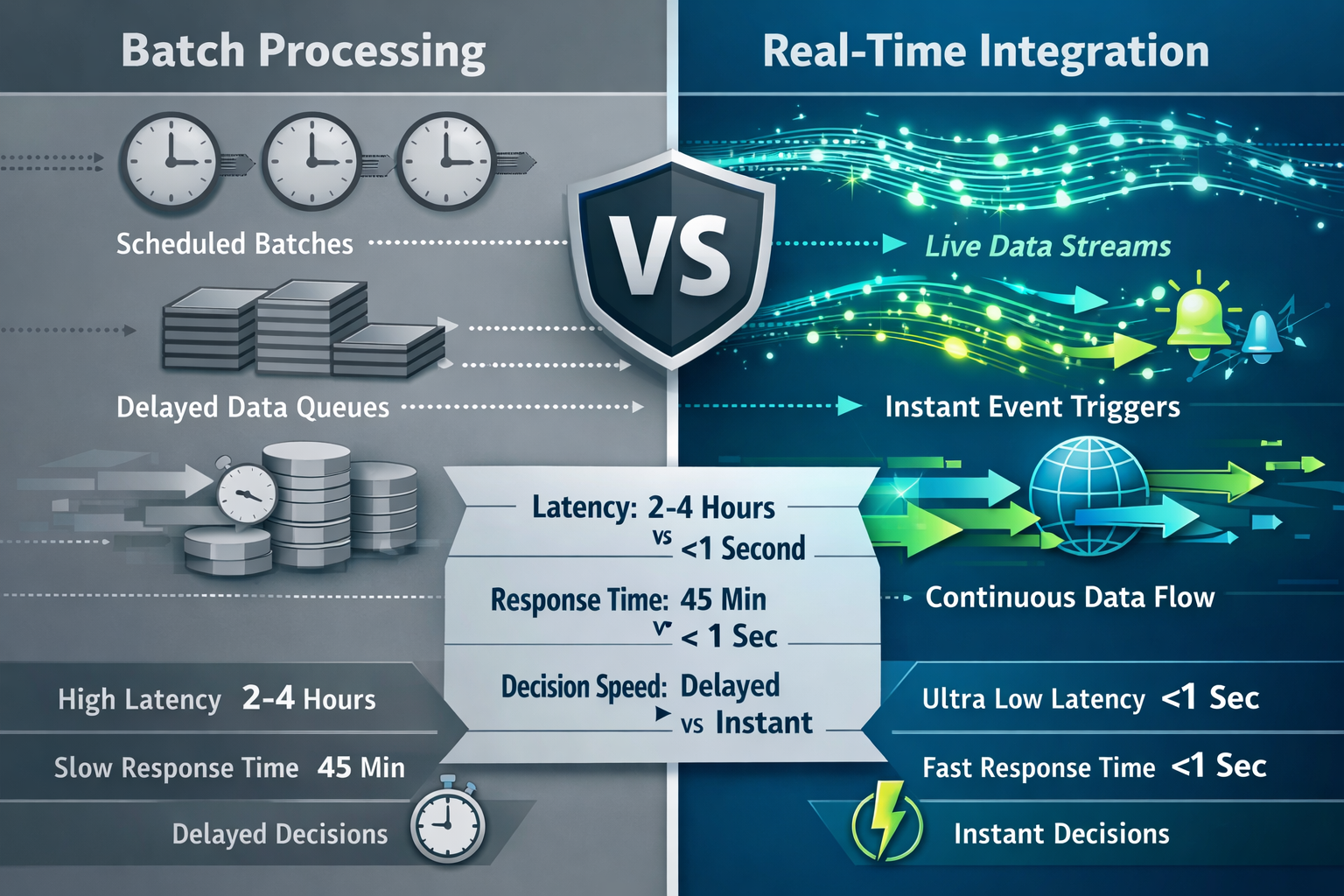

The core difference:

- Batch processing collects data, processes it on a schedule (hourly, daily, weekly), then updates downstream systems

- Real-time integration captures events as they occur and propagates changes immediately across all connected systems
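
As a rough illustration of that difference, the sketch below computes how long a downstream system waits to see a single event under each model. The hourly schedule, the 2-second propagation delay, and the function names are assumptions made for the example, not figures from the cited sources.

```python
from datetime import datetime, timedelta


def batch_staleness(event_time: datetime, batch_interval: timedelta,
                    schedule_start: datetime) -> timedelta:
    """Time an event waits until the next scheduled batch run picks it up."""
    elapsed = event_time - schedule_start
    # Whole batch intervals that finished before the event occurred.
    completed_runs = elapsed // batch_interval
    next_run = schedule_start + (completed_runs + 1) * batch_interval
    return next_run - event_time


def realtime_staleness(propagation_delay: timedelta) -> timedelta:
    """In an event-driven flow, staleness is just the propagation delay."""
    return propagation_delay


midnight = datetime(2026, 3, 7, 0, 0, 0)
order_placed = datetime(2026, 3, 7, 9, 5, 0)

hourly_wait = batch_staleness(order_placed, timedelta(hours=1), midnight)
live_wait = realtime_staleness(timedelta(seconds=2))  # assumed delay

print(f"hourly batch: downstream sees the order after {hourly_wait}")
print(f"event-driven: downstream sees the order after {live_wait}")
```

With these assumed inputs, an order placed at 09:05 waits 55 minutes for the 10:00 batch run, while the event-driven path is limited only by propagation delay.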

Why this matters for agents:

Autonomous agents need to detect patterns, identify anomalies, and execute responses within operational windows measured in minutes, not days. An agent monitoring cloud infrastructure can't wait for a nightly batch to detect a security breach. A pricing optimization agent can't respond to competitor moves using yesterday's data.

Choose real-time integration if your agentic workflows involve dynamic inventory, customer service automation, fraud detection, demand forecasting, or any scenario where decisions degrade rapidly with data age. Batch processing remains acceptable only for historical analytics, compliance reporting, and scenarios where staleness doesn't impact outcomes.

How Does Real-Time Data Integration Actually Enable Autonomous Agent Decisions?

Real-time data integration enables autonomous agents by providing three critical capabilities: immediate observability, contextual awareness, and closed-loop feedback.

Immediate observability means agents can monitor business operations as they unfold. When a customer places an order, inventory levels update instantly. When server load spikes, infrastructure agents see the change within seconds. This visibility allows agents to detect anomalies—unusual patterns that signal problems or opportunities—before they cascade into larger issues.

Contextual awareness comes from correlating live data across multiple sources. An agent optimizing marketing spend doesn't just see campaign performance in isolation; it sees real-time conversion rates, inventory availability, competitor pricing, and customer service volume simultaneously. This multi-dimensional view enables sophisticated decisions that batch-processed data cannot support.

Closed-loop feedback allows agents to execute actions and immediately observe results. A dynamic pricing agent adjusts rates, sees demand response within minutes, and refines its strategy accordingly. This rapid iteration is impossible with batch processing, where feedback arrives hours or days later.

Common mistake: Organizations implement agentic AI but feed it batch-processed data, then wonder why agents make poor decisions. The agent isn't failing—it's operating on information that's already obsolete by the time it arrives.

Edge case to consider: Some decisions genuinely don't require real-time data. Strategic planning, long-term trend analysis, and compliance reporting can use batch processing effectively. The key is matching data freshness to decision velocity.
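
One way to make "matching data freshness to decision velocity" concrete is a per-decision freshness budget. The sketch below is a minimal illustration; the decision types and age limits are hypothetical values chosen for the example, not recommendations from the sources.

```python
from datetime import datetime, timedelta

# Hypothetical freshness budgets per decision type (assumed values).
MAX_DATA_AGE = {
    "fraud_check": timedelta(seconds=5),
    "dynamic_pricing": timedelta(minutes=5),
    "compliance_report": timedelta(days=1),
}


def is_fresh_enough(decision_type: str, data_timestamp: datetime,
                    now: datetime) -> bool:
    """Return True if the data is young enough for this kind of decision."""
    return (now - data_timestamp) <= MAX_DATA_AGE[decision_type]


now = datetime(2026, 3, 7, 12, 0, 0)
snapshot_time = now - timedelta(hours=1)  # e.g. the last hourly batch run

for decision in MAX_DATA_AGE:
    fresh = is_fresh_enough(decision, snapshot_time, now)
    print(f"{decision}: {'ok' if fresh else 'too stale'}")
```

Under these assumptions, an hour-old snapshot is acceptable for the compliance report but too stale for the fraud check and the pricing decision, which is the distinction the edge case describes.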

What Are the Specific Business Outcomes When Live Data Powers Agentic SaaS?

Organizations that implement real-time data integration for agentic workflows see measurable improvements across four key areas: customer satisfaction, operational efficiency, revenue optimization, and risk mitigation.

Customer satisfaction improvements:

- 80% of customers expect real-time responses when interacting with brands [1]

- Agents with live data can provide accurate order status, inventory availability, and personalized recommendations instantly

- Support time can be cut by over 50% when agents have immediate access to customer context [2]

Operational efficiency gains:

- iPaaS platforms reduce integration cycles by 30% and decrease API processing time by 25% [1]

- Autonomous agents can optimize resource allocation continuously rather than waiting for batch updates

- Event-driven architectures eliminate the overhead of scheduled sync jobs and can reduce infrastructure costs over time, after the initial increase that migration typically brings

Revenue optimization:

- Dynamic pricing agents can respond to competitor moves, demand shifts, and inventory levels in real-time

- Marketing optimization agents can reallocate budget to high-performing channels within minutes of detecting performance changes

- Inventory agents can prevent stockouts and overstock situations by reacting to actual demand patterns as they emerge

Risk mitigation:

- Fraud detection agents can block suspicious transactions before they complete

- Security agents can respond to threats within seconds of detection

- Compliance agents can flag violations immediately rather than discovering them in post-hoc audits

Decision rule: Choose real-time integration when the cost of delayed decisions exceeds the cost of implementing event-driven infrastructure. For most customer-facing operations in 2026, this threshold has already been crossed.
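
The decision rule can be written as a simple comparison. This is a back-of-the-envelope sketch: the parameter names and all numbers in the usage lines are made up for illustration, and a real estimate would need measured values.

```python
def should_go_realtime(decisions_per_day: float,
                       stale_fraction: float,
                       cost_per_stale_decision: float,
                       realtime_cost_per_day: float) -> bool:
    """Adopt real-time when the daily cost of delay exceeds its daily cost."""
    delay_cost_per_day = (
        decisions_per_day * stale_fraction * cost_per_stale_decision
    )
    return delay_cost_per_day > realtime_cost_per_day


# Hypothetical customer-facing workflow: many decisions, some hurt by stale data.
customer_facing = should_go_realtime(
    decisions_per_day=10_000, stale_fraction=0.02,
    cost_per_stale_decision=5.0, realtime_cost_per_day=400.0)

# Hypothetical back-office report: few decisions, same infrastructure cost.
back_office = should_go_realtime(
    decisions_per_day=100, stale_fraction=0.02,
    cost_per_stale_decision=5.0, realtime_cost_per_day=400.0)

print(customer_facing, back_office)
```

With these invented figures the customer-facing workflow clears the threshold and the back-office one does not, mirroring the guidance to keep low-velocity workloads on batch.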

Why Is Batch Processing Becoming a Competitive Liability in 2026?

Batch processing is becoming a competitive liability because enterprise buyers, consumers, and AI systems all now expect immediacy as the baseline standard. What was acceptable in 2020 is disqualifying in 2026.

Enterprise adoption has shifted decisively: Over 54% of enterprises now prioritize real-time data exchange over batch processing [1]. This isn't a marginal preference—it's a fundamental change in how businesses evaluate technology partners. Batch syncs are no longer acceptable for tier-1 enterprises [4].

Customer expectations have evolved: 73% of B2B buyers expect the same immediacy they experience as consumers [1]. When buyers interact with consumer platforms that provide instant order tracking, real-time inventory visibility, and immediate support responses, they expect the same from enterprise vendors. Batch-processed data creates visible delays that signal technical debt and operational inefficiency.

AI systems cannot compensate for stale data: Agentic AI is projected to handle 15% of work decisions autonomously by 2026 [2]. These agents make decisions based on patterns in the data they receive. When that data is hours or days old, patterns are already obsolete. No amount of model sophistication can overcome fundamentally outdated inputs.

Infrastructure modernization is now the competitive advantage: The fastest-moving companies are prioritizing infrastructure rebuilds alongside feature development [3]. Event-driven architectures, API normalization, and modular refactors from monolithic systems have become critical competitive factors.

The compounding effect: Organizations using batch processing don't just lag in one area—they accumulate disadvantages across customer experience, operational efficiency, and AI effectiveness simultaneously. This creates a widening gap between leaders and laggards that becomes harder to close over time.

Common mistake: Treating real-time integration as a "nice to have" feature rather than foundational infrastructure. By the time competitive pressure forces adoption, the technical debt and organizational change required make migration significantly more difficult.

What Infrastructure Components Enable Real-Time Data Integration for Agents?

Real-time data integration for agentic workflows requires four core infrastructure components: event-driven architecture, API-first design, integration platforms, and data quality pipelines.

Event-driven architecture (EDA) replaces scheduled batch jobs with immediate event propagation. When a business event occurs—customer purchase, inventory change, service request—the system publishes an event that triggers downstream processing instantly. EDA components include:

- Message queues and event streams (Kafka, RabbitMQ, AWS EventBridge)

- Event schemas and versioning for consistent data structure

- Pub/sub patterns that decouple event producers from consumers

- Webhooks for real-time notifications to external systems
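
To show the pub/sub decoupling in miniature, here is an in-memory sketch. It is a toy stand-in for a real broker such as those named above; the `InMemoryEventBus` class, the topic name, and the event fields are all hypothetical.

```python
from collections import defaultdict
from typing import Any, Callable, DefaultDict, Dict, List

Event = Dict[str, Any]
Handler = Callable[[Event], None]


class InMemoryEventBus:
    """Toy publish/subscribe bus; not a substitute for a real broker."""

    def __init__(self) -> None:
        self._subscribers: DefaultDict[str, List[Handler]] = defaultdict(list)

    def subscribe(self, topic: str, handler: Handler) -> None:
        self._subscribers[topic].append(handler)

    def publish(self, topic: str, event: Event) -> None:
        # The producer does not know which consumers exist.
        for handler in self._subscribers[topic]:
            handler(event)


inventory = {"sku-123": 10}
notifications: List[str] = []


def update_inventory(event: Event) -> None:
    inventory[event["sku"]] -= event["quantity"]


def notify_agent(event: Event) -> None:
    notifications.append(f"order {event['order_id']} placed")


bus = InMemoryEventBus()
bus.subscribe("order.placed", update_inventory)
bus.subscribe("order.placed", notify_agent)

# One published event fans out to both consumers immediately.
bus.publish("order.placed", {
    "schema_version": 1,  # versioned schema, as the list above suggests
    "order_id": "o-1",
    "sku": "sku-123",
    "quantity": 3,
})
print(inventory, notifications)
```

Adding a third consumer only requires another `subscribe` call; the publishing code does not change, which is the point of decoupling producers from consumers.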

API-first design makes integration a first-class product feature rather than an afterthought. Modern SaaS platforms expose:

- RESTful APIs with comprehensive endpoint coverage

- GraphQL interfaces for flexible data queries

- WebSocket connections for bidirectional real-time communication

- API versioning and deprecation policies for stable integrations

Integration platforms orchestrate connections between systems without custom code for each integration. Over 62% of enterprises are adopting cloud-based integration frameworks [1]. Key platform types:

- iPaaS (Integration Platform as a Service) provides pre-built connectors, transformation logic, and monitoring

- API gateways normalize authentication, rate limiting, and routing across multiple APIs

- Data orchestration tools coordinate complex workflows across multiple systems

Data quality pipelines ensure that real-time data is actually usable. AI value is constrained by data quality and availability, not model sophistication [3]. Quality components include:

- Schema validation to reject malformed data at ingestion

- Deduplication to prevent duplicate events from creating inconsistent state

- Enrichment to add context from reference data sources

- Versioning to track data lineage and enable rollback
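
The first three quality steps can be sketched as a single gate that each event passes through. This is a minimal illustration under assumed field names and a hypothetical `PRODUCT_CATALOG`; a production pipeline would use a schema registry and a bounded or persistent deduplication store.

```python
from typing import Any, Dict, Optional, Set

# Assumed event schema: field name -> required type.
REQUIRED_FIELDS = {"event_id": str, "sku": str, "quantity": int}
# Hypothetical reference data used for enrichment.
PRODUCT_CATALOG = {"sku-123": {"category": "widgets"}}


class QualityGate:
    def __init__(self) -> None:
        # In-memory and unbounded: fine for a sketch, not for production.
        self._seen_event_ids: Set[str] = set()

    def process(self, event: Dict[str, Any]) -> Optional[Dict[str, Any]]:
        """Return the cleaned event, or None if it is rejected."""
        # 1. Schema validation: reject malformed data at ingestion.
        for field, expected_type in REQUIRED_FIELDS.items():
            if not isinstance(event.get(field), expected_type):
                return None
        # 2. Deduplication: drop events that were already accepted.
        if event["event_id"] in self._seen_event_ids:
            return None
        self._seen_event_ids.add(event["event_id"])
        # 3. Enrichment: add context from reference data, if available.
        enriched = dict(event)
        enriched.update(PRODUCT_CATALOG.get(event["sku"], {}))
        return enriched


gate = QualityGate()
good = {"event_id": "e-1", "sku": "sku-123", "quantity": 3}
malformed = {"event_id": "e-2", "sku": "sku-123", "quantity": "3"}

first = gate.process(good)
duplicate = gate.process(good)
rejected = gate.process(malformed)
print(first, duplicate, rejected)
```

The first event comes back enriched with its category, while the repeated event and the one with a string quantity are both rejected before they can reach an agent.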

Choose iPaaS if: You need to connect multiple SaaS applications quickly without building custom integrations. iPaaS reduces integration cycles by 30% [1].

Build custom event-driven architecture if: You have unique business logic, high-volume data flows, or specific latency requirements that generic platforms cannot meet.

How Do You Transition From Batch Processing to Real-Time Integration Without Disrupting Operations?

Transitioning from batch processing to real-time integration requires a phased approach that minimizes risk while building organizational capability. The migration follows five stages: assessment, pilot, parallel operation, cutover, and optimization.

Stage 1: Assessment (2-4 weeks)

- Inventory existing batch processes and their schedules

- Identify which workflows genuinely require real-time data versus those that can remain batch

- Map data dependencies and integration points

- Establish baseline metrics for latency, accuracy, and operational cost

- Decision rule: Prioritize customer-facing workflows and agentic AI systems for real-time migration; keep back-office analytics on batch processing

Stage 2: Pilot (4-8 weeks)

- Select one high-value, low-complexity workflow for initial real-time implementation

- Choose workflows where business impact is measurable and stakeholder buy-in is strong

- Implement event-driven architecture for the pilot workflow

- Establish monitoring and alerting for the new real-time pipeline

- Common mistake: Choosing overly complex workflows for the pilot, which delays results and reduces confidence

Stage 3: Parallel Operation (4-12 weeks)

- Run batch and real-time systems simultaneously

- Compare outputs to validate data consistency and accuracy

- Tune event processing logic based on real-world data patterns

- Train operations teams on new monitoring and troubleshooting procedures

- Edge case: Some data transformations that work in batch (like complex aggregations) may need redesign for streaming contexts
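
During parallel operation, the output comparison can be as simple as diffing the two pipelines' results by key. The sketch below assumes both pipelines produce a mapping from record key to a numeric value; the function name and sample data are hypothetical.

```python
from typing import Dict, List, Tuple


def compare_outputs(batch: Dict[str, float], realtime: Dict[str, float],
                    tolerance: float = 0.0) -> Tuple[float, List[str]]:
    """Return (match rate, keys that disagree) across both pipelines."""
    all_keys = sorted(set(batch) | set(realtime))
    if not all_keys:
        return 1.0, []
    mismatched = [
        key for key in all_keys
        if key not in batch
        or key not in realtime
        or abs(batch[key] - realtime[key]) > tolerance
    ]
    match_rate = 1 - len(mismatched) / len(all_keys)
    return match_rate, mismatched


batch_stock = {"sku-1": 10, "sku-2": 5, "sku-3": 0, "sku-4": 7}
realtime_stock = {"sku-1": 10, "sku-2": 4, "sku-3": 0, "sku-4": 7}

match_rate, mismatched = compare_outputs(batch_stock, realtime_stock)
print(f"match rate: {match_rate:.2%}, mismatched keys: {mismatched}")
```

A key that is missing from either side counts as a mismatch, so dropped events show up in the same report as wrong values.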

Stage 4: Cutover (1-2 weeks per workflow)

- Redirect production traffic to real-time pipeline

- Maintain batch system as fallback for initial cutover period

- Monitor closely for anomalies or performance issues

- Document lessons learned and update migration playbook

- Decision rule: Only proceed to cutover when parallel operation shows consistent accuracy for at least two weeks
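
The cutover rule can be checked mechanically against daily comparison results. In this sketch the 14-day window comes from the rule above, while the 99.9% match threshold is an assumed definition of "consistent accuracy" that each team would set for itself.

```python
from typing import Sequence


def ready_for_cutover(daily_match_rates: Sequence[float],
                      required_days: int = 14,
                      min_match_rate: float = 0.999) -> bool:
    """True if the most recent `required_days` days all meet the threshold."""
    if len(daily_match_rates) < required_days:
        return False  # Not enough parallel-run history yet.
    recent = daily_match_rates[-required_days:]
    return all(rate >= min_match_rate for rate in recent)


clean_run = [1.0] * 14
recent_regression = [1.0] * 10 + [0.95] + [1.0] * 3

print(ready_for_cutover(clean_run))
print(ready_for_cutover(recent_regression))
```

A single bad day inside the window resets the decision, which keeps a recent regression from being averaged away.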

Stage 5: Optimization (ongoing)

- Reduce latency through architecture refinements

- Implement cost optimizations based on actual usage patterns

- Expand real-time integration to additional workflows

- Build organizational muscle for event-driven thinking

Quick checklist for successful migration:

- Secure executive sponsorship and adequate budget

- Establish clear success metrics before starting

- Invest in monitoring and observability infrastructure early

- Train teams on event-driven concepts and troubleshooting

- Plan for data quality issues that batch processing may have masked

- Document event schemas and maintain version control

- Build rollback procedures before cutover

What Are the Hidden Costs and Common Pitfalls of Real-Time Integration?

Real-time data integration delivers significant value, but it also introduces costs and complexity that organizations must anticipate and manage. The hidden costs fall into four categories: infrastructure, operational overhead, data quality debt, and organizational change.

Infrastructure costs:

- Event streaming platforms (Kafka, Kinesis) require dedicated infrastructure and expertise

- API call volumes increase dramatically compared to batch processing, potentially triggering usage-based pricing tiers

- Monitoring and observability tools become essential rather than optional

- Estimate: Infrastructure costs typically increase 20-40% initially, then decline as efficiency improvements offset volume growth

Operational overhead:

- 24/7 monitoring becomes necessary because real-time systems can't wait until morning for issue resolution

- On-call rotations and incident response procedures add staffing requirements

- Troubleshooting distributed event-driven systems requires different skills than debugging batch jobs

- Common mistake: Underestimating the operational maturity required to run real-time systems reliably

Data quality debt:

- Batch processing often masks data quality issues through error handling and retry logic

- Real-time systems expose these issues immediately, requiring upstream fixes

- Schema evolution becomes more complex when changes must propagate instantly

- Edge case: Some data sources are inherently unreliable and may require buffering or quality gates even in real-time architectures

Organizational change:

- Teams accustomed to batch processing schedules must adapt to continuous operation

- Business processes that assumed data latency may need redesign

- Cross-functional coordination increases as teams come to depend on each other's events and schemas in near real time

- Decision rule: Budget 30-40% of project effort for organizational change management, not just technology implementation

Common pitfalls to avoid:

- Premature optimization: Implementing real-time integration for workflows that don't require it

- Insufficient monitoring: Deploying real-time systems without comprehensive observability

- Ignoring data quality: Assuming that faster data delivery will compensate for accuracy issues

- Underestimating complexity: Treating event-driven architecture as a simple technology swap rather than a fundamental design change

- Lack of governance: Allowing event schemas to proliferate without versioning and documentation standards

When to reconsider real-time integration:

- Decision latency requirements are measured in hours or days, not minutes

- Data sources update infrequently (weekly, monthly)

- Organizational maturity for 24/7 operations is insufficient

- Budget constraints make infrastructure investment prohibitive

The key is matching integration strategy to actual business requirements rather than adopting real-time processing as a default.

How Will Real-Time Data Integration Evolve as Agentic AI Becomes More Sophisticated?

Real-time data integration will evolve in three directions as agentic AI becomes more sophisticated: deeper automation, predictive data provisioning, and self-healing integration infrastructure.

Deeper automation means agents will not just consume real-time data but actively manage integration workflows. By 2026, over 80% of enterprise SaaS applications will embed AI-driven capabilities as standard features [2]. These embedded agents will:

- Automatically discover and map data sources

- Generate integration logic based on business intent rather than technical specifications

- Optimize data flow routing based on cost, latency, and reliability constraints

- Detect and resolve integration failures without human intervention

Predictive data provisioning shifts from reactive to proactive data delivery. Instead of waiting for agents to request data, integration platforms will anticipate needs based on patterns:

- Pre-fetch data that agents are likely to need based on current context

- Cache frequently accessed data at the edge for ultra-low latency

- Adjust data refresh rates dynamically based on decision velocity

- Example: A pricing agent preparing to adjust rates would automatically receive competitor pricing, inventory levels, and demand forecasts without explicit requests

Self-healing integration infrastructure will reduce operational overhead through autonomous problem resolution:

- Automatic failover when data sources become unavailable

- Dynamic schema adaptation when upstream systems change

- Intelligent retry and backoff strategies that learn from failure patterns

- Anomaly detection that identifies data quality issues before they impact decisions
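
The retry-and-backoff item has a well-known non-learning baseline: capped exponential backoff with jitter. The sketch below shows that baseline only, not the adaptive behavior described above; the function name, default delays, and the simulated flaky source are assumptions for the example.

```python
import random
import time
from typing import Callable, List, TypeVar

T = TypeVar("T")


def call_with_backoff(operation: Callable[[], T],
                      max_attempts: int = 5,
                      base_delay: float = 0.5,
                      max_delay: float = 30.0,
                      sleep: Callable[[float], None] = time.sleep) -> T:
    """Retry `operation` with capped exponential backoff and full jitter."""
    if max_attempts < 1:
        raise ValueError("max_attempts must be at least 1")
    for attempt in range(max_attempts):
        try:
            return operation()
        except ConnectionError:
            if attempt == max_attempts - 1:
                raise  # Out of attempts: surface the failure to the caller.
            # Delay ceiling doubles each attempt, up to max_delay.
            ceiling = min(max_delay, base_delay * (2 ** attempt))
            sleep(random.uniform(0, ceiling))
    raise AssertionError("unreachable")


attempts = {"count": 0}


def flaky_fetch() -> dict:
    """Simulated data source that fails twice, then recovers."""
    attempts["count"] += 1
    if attempts["count"] < 3:
        raise ConnectionError("source unavailable")
    return {"sku-123": 7}


delays: List[float] = []
# Record the delays instead of sleeping so the example runs instantly.
result = call_with_backoff(flaky_fetch, sleep=delays.append)
print(result, attempts["count"], len(delays))
```

Passing `sleep` as a parameter keeps the retry logic testable; a self-healing platform would presumably tune `base_delay` and `max_attempts` from observed failure patterns rather than fixing them.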

Market growth indicators:

- The global AI-as-a-Service market is projected to reach $5.6 billion by 2030 with a 37.1% compound annual growth rate [2]

- Nearly 75% of organizations plan to increase investment in AI-based software over the next year [2]

- API-first architecture and open APIs are becoming first-class product features rather than afterthoughts [5]

Emerging patterns to watch:

- Data mesh architectures that treat data as a product with domain-specific ownership

- Federated learning that enables AI training on distributed real-time data without centralization

- Edge computing integration that processes data closer to sources for ultra-low latency

- Blockchain-based event logs for tamper-proof audit trails in regulated industries

Decision rule for 2026: Invest in integration platforms that support AI-driven automation and can evolve as agent capabilities advance. Avoid platforms that treat integration as purely a technical plumbing problem without consideration for how agents will consume and act on data.

What Should Organizations Prioritize When Building Real-Time Integration for Agentic Workflows?

Organizations building real-time integration for agentic workflows should prioritize three foundational elements before adding features or expanding scope: data quality infrastructure, event-driven architecture, and observability.

Data quality infrastructure comes first because AI value is constrained by data quality and availability, not model sophistication [3]. Prioritize:

- Schema validation at ingestion to reject malformed data immediately

- Data contracts that specify quality guarantees between systems

- Automated testing for data pipelines to catch regressions

- Lineage tracking to understand data provenance and transformation history

- Quality metrics that measure completeness, accuracy, timeliness, and consistency

Event-driven architecture provides the foundation for real-time data flow. Focus on:

- Event schema design that balances flexibility and stability

- Versioning strategy that allows schema evolution without breaking consumers

- Idempotency so that duplicate events don't create inconsistent state

- Ordering guarantees when sequence matters for business logic

- Partitioning strategy to scale event processing horizontally
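
Idempotency and partitioning are easier to reason about with a small example. The sketch below is a generic illustration, not how any particular broker assigns partitions; the class, the event fields, and the hash-based partitioner are all assumptions.

```python
import hashlib
from typing import Any, Dict, Set


def partition_for(key: str, num_partitions: int) -> int:
    """Map a key to a stable partition so events for one key stay together."""
    digest = hashlib.sha256(key.encode("utf-8")).digest()
    return int.from_bytes(digest[:8], "big") % num_partitions


class IdempotentInventoryConsumer:
    """Applies each event at most once, keyed by its event_id."""

    def __init__(self) -> None:
        self.stock: Dict[str, int] = {}
        self._processed: Set[str] = set()

    def handle(self, event: Dict[str, Any]) -> bool:
        """Apply the event; return False if it was a duplicate delivery."""
        if event["event_id"] in self._processed:
            return False
        sku = event["sku"]
        self.stock[sku] = self.stock.get(sku, 0) + event["delta"]
        self._processed.add(event["event_id"])
        return True


consumer = IdempotentInventoryConsumer()
restock = {"event_id": "e-1", "sku": "sku-123", "delta": 10}
sale = {"event_id": "e-2", "sku": "sku-123", "delta": -3}

# The sale is delivered twice, as can happen with at-least-once delivery.
results = [consumer.handle(restock), consumer.handle(sale), consumer.handle(sale)]
print(results, consumer.stock)
print(partition_for("sku-123", 8) == partition_for("sku-123", 8))
```

Routing by SKU keeps all events for one product on one partition, which preserves their relative order, while the processed-ID set makes redelivery harmless.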

Observability enables operational confidence in real-time systems. Implement:

- End-to-end tracing to follow events through distributed systems

- Real-time dashboards showing data flow, latency, and error rates

- Alerting on anomalies, failures, and performance degradation

- Logging that captures sufficient context for troubleshooting

- Capacity planning based on actual usage patterns and growth projections
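
As one example of a latency alert, the sketch below measures end-to-end delay from event timestamps and flags a breach of a 95th-percentile threshold. The `produced_at` and `consumed_at` field names and the 5-second threshold are assumptions; `statistics.quantiles` requires at least two samples.

```python
import statistics
from datetime import datetime, timedelta
from typing import Dict, List


def end_to_end_latencies(events: List[Dict[str, datetime]]) -> List[float]:
    """Seconds between an event being produced and being consumed."""
    return [
        (event["consumed_at"] - event["produced_at"]).total_seconds()
        for event in events
    ]


def p95_latency_breached(latencies: List[float],
                         threshold_seconds: float) -> bool:
    """True if the 95th-percentile latency exceeds the threshold."""
    # quantiles(n=20) returns 19 cut points; the last is the 95th percentile.
    p95 = statistics.quantiles(latencies, n=20)[-1]
    return p95 > threshold_seconds


start = datetime(2026, 3, 7, 12, 0, 0)
healthy = [
    {"produced_at": start, "consumed_at": start + timedelta(seconds=1)}
    for _ in range(20)
]
degraded = [
    {"produced_at": start, "consumed_at": start + timedelta(seconds=10)}
    for _ in range(20)
]

healthy_alert = p95_latency_breached(end_to_end_latencies(healthy), 5.0)
degraded_alert = p95_latency_breached(end_to_end_latencies(degraded), 5.0)
print(healthy_alert, degraded_alert)
```

Alerting on a high percentile rather than the mean helps surface the slow tail of events, which is usually what an agent acting on late data experiences.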

Practical prioritization framework:

| Priority Level | Focus Area | Timeline | Success Metric |
|---|---|---|---|
| Critical (Month 1-3) | Data quality gates, basic event streaming, core monitoring | 90 days | Zero data quality incidents reaching agents |
| High (Month 4-6) | Schema versioning, advanced observability, pilot agentic workflow | 180 days | One production agentic workflow on real-time data |
| Medium (Month 7-12) | Self-service integration, automated testing, expanded agent coverage | 365 days | 50% of target workflows migrated to real-time |
| Low (Year 2+) | Predictive provisioning, self-healing infrastructure, advanced optimization | Ongoing | Autonomous operation with minimal manual intervention |

Common mistake: Organizations rush to implement sophisticated AI agents before establishing reliable real-time data infrastructure, then struggle with agent accuracy and reliability issues that stem from data problems, not model deficiencies.

Choose real-time integration if:

- Customer-facing workflows require immediate responses

- Business decisions degrade rapidly with data age

- Competitive differentiation depends on operational speed

- Agentic AI systems are core to business strategy

Stick with batch processing if:

- Decision latency requirements exceed one hour

- Data sources update infrequently

- Operational maturity for 24/7 systems is insufficient

- Budget constraints make infrastructure investment prohibitive

Conclusion

Real-time data integration is the hidden multiplier for agentic SaaS workflows. In 2026, live data beats batch processing not as a theoretical advantage but as a practical necessity driven by customer expectations, enterprise requirements, and the fundamental needs of autonomous AI systems. With over 54% of enterprises prioritizing real-time data exchange and 80% of customers expecting immediate responses, batch processing has shifted from standard practice to competitive liability [1].

The core insight is straightforward: agentic AI systems cannot make accurate decisions on stale information. When autonomous agents detect anomalies, respond to demand shifts, and optimize operations continuously, they require live data feeds that reflect current reality, not yesterday's snapshot. Organizations that implement real-time integration see measurable improvements in customer satisfaction, operational efficiency, revenue optimization, and risk mitigation.

Actionable next steps:

- Assess current state: Inventory existing batch processes and identify which workflows genuinely require real-time data versus those that can remain batch-processed

- Establish baseline metrics: Measure current latency, accuracy, and operational costs to quantify improvement opportunities

- Prioritize data quality: Implement schema validation, data contracts, and quality metrics before expanding real-time integration scope

- Start with a pilot: Choose one high-value, low-complexity workflow for initial real-time implementation to build organizational capability

- Invest in observability: Deploy monitoring, alerting, and tracing infrastructure to enable confident operation of real-time systems

- Plan for organizational change: Budget adequate time and resources for training, process redesign, and change management

The transition from batch to real-time integration requires investment in infrastructure, operational maturity, and organizational adaptation. But for organizations deploying agentic AI workflows in 2026, this investment is not optional—it's the foundation that determines whether autonomous agents deliver value or create new problems. The question is not whether to adopt real-time integration, but how quickly organizations can build the capability before competitive pressure makes the gap too wide to close.

FAQ

What is the main difference between real-time data integration and batch processing?

Real-time integration propagates data changes immediately with latency measured in seconds, while batch processing collects data and updates systems on a schedule (hourly, daily, weekly). Real-time enables immediate decision-making; batch introduces delays that can range from minutes to days.

Why do agentic AI systems specifically require real-time data?

Agentic AI systems make autonomous decisions without human approval. These decisions are only accurate when based on current information. Stale data from batch processing causes agents to operate on outdated assumptions, leading to inventory errors, missed opportunities, and poor customer experiences.

How much does real-time integration infrastructure typically cost compared to batch processing?

Infrastructure costs typically increase 20-40% initially when transitioning to real-time integration, primarily from event streaming platforms, increased API calls, and monitoring tools. Costs often decline over time as efficiency improvements offset volume growth.

Can some workflows remain on batch processing while others use real-time integration?

Yes, and this is often the optimal approach. Strategic planning, long-term trend analysis, and compliance reporting can remain on batch processing. Prioritize real-time integration for customer-facing workflows, agentic AI systems, and any decisions that degrade rapidly with data age.

What is the biggest mistake organizations make when implementing real-time integration?

The biggest mistake is implementing sophisticated AI agents before establishing reliable real-time data infrastructure. Organizations then struggle with agent accuracy issues that stem from data quality and latency problems, not model deficiencies.

How long does it typically take to transition from batch to real-time integration?

For a single workflow, the stage estimates are assessment (2-4 weeks), pilot (4-8 weeks), parallel operation (4-12 weeks), and cutover (1-2 weeks per workflow), which sum to roughly 11-26 weeks. Allowing for several workflows, initial production deployment typically takes 6-12 months, and full migration of all target workflows may take 18-24 months.

What skills do teams need to operate real-time integration systems?

Teams need expertise in event-driven architecture, distributed systems troubleshooting, API design, data quality management, and 24/7 operational practices. These differ significantly from batch processing skills, requiring training and potentially new hires.

Is real-time integration necessary for all SaaS applications in 2026?

No, but it has become the baseline expectation for enterprise-tier applications and any system supporting autonomous AI agents. Over 54% of enterprises now prioritize real-time data exchange, and batch syncs are no longer acceptable for tier-1 enterprise buyers [1][4].

What is iPaaS and how does it help with real-time integration?

Integration Platform as a Service (iPaaS) provides pre-built connectors, transformation logic, and monitoring that orchestrate connections between systems without custom code. iPaaS reduces integration cycles by 30% and API processing time by 25% [1].

How do you measure the success of real-time integration implementation?

Key metrics include data latency (time from event to availability), decision accuracy (correctness of agent actions), customer satisfaction scores, operational efficiency gains, and revenue impact. Baseline these metrics before migration to quantify improvement.

What happens if real-time data feeds fail or become unavailable?

Robust real-time systems include fallback mechanisms: cached data for temporary outages, automatic failover to backup sources, graceful degradation to batch processing when necessary, and clear alerting to operations teams. Self-healing infrastructure is an emerging capability.

Can small organizations afford real-time integration infrastructure?

Cloud-based iPaaS platforms and managed event streaming services have reduced the barrier to entry significantly. Small organizations can start with managed services that charge based on usage, avoiding large upfront infrastructure investments while gaining real-time capabilities.

References

[1] Enterprise Integration Statistics Trends You Need To Know In 2026 – https://www.appseconnect.com/post_articles/enterprise-integration-statistics-trends-you-need-to-know-in-2026/

[2] Top 10 Saas Trends To Watch In 2026 – https://www.techugo.com/blog/top-10-saas-trends-to-watch-in-2026/

[3] Saas 2026 Trends From Ai Experiments To Production Ready Platforms – https://ardas-it.com/saas-2026-trends-from-ai-experiments-to-production-ready-platforms

[4] Saas Integration Strategies 2026 – https://saasnovas.com/saas-integration-strategies-2026/

[5] Saas Trends – https://tridenstechnology.com/saas-trends/