Last updated: March 23, 2026

Quick Answer

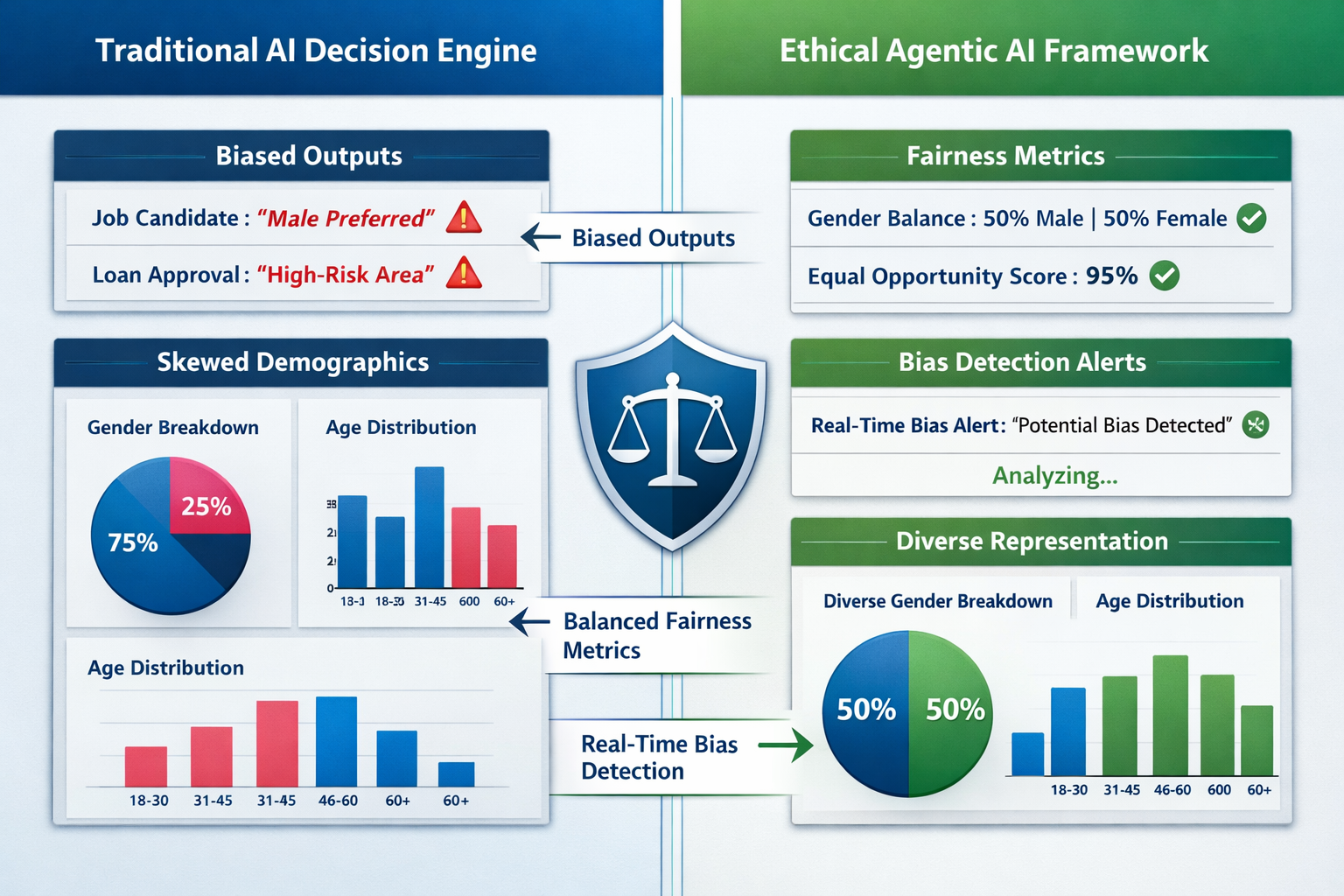

Ethical Agentic AI Frameworks for high-ticket B2B decision engines embed real-time bias detection auditors, fairness enforcement modules, and regulatory compliance monitors directly into autonomous AI workflows to prevent discriminatory outcomes and legal violations. These frameworks require continuous audit trails, human oversight mechanisms, and governance agents that independently verify other AI systems comply with EU AI Act mandates, ISO/IEC 42001 standards, and sector-specific regulations before executing decisions worth thousands or millions of dollars.

Key Takeaways

- Bias detection auditors now run continuously in production, simulating edge cases and demographic scenarios to catch discriminatory patterns before they affect real B2B transactions

- EU AI Act compliance mandates effective human oversight for high-risk AI systems, creating tension with agentic AI's autonomous design that organizations must resolve through structured governance

- Governance agents act as meta-layer controls that independently monitor other AI systems for policy violations, shifting governance from overhead to strategic enabler

- The choice between human-in-the-loop and human-on-the-loop oversight determines when autonomous agents require pre-approval (finance, healthcare) versus retrospective review (marketing, operations)

- ISO/IEC 42001 provides the management system framework organizations need to document AI oversight and demonstrate regulatory control

- Over 2,000 legal claims related to insufficient AI guardrails are anticipated in 2026, driving urgent implementation of stronger governance mechanisms[5]

- Cross-functional governance councils must codify ethical dilemmas into operational constraints, translating organizational values into agent logic

- Continuous compliance monitoring tracks regulatory changes across GDPR, AML, sanctions lists, and sector mandates, alerting on violations before escalation

- Audit trail requirements for all agent actions, clear approval workflows, and override mechanisms are now non-negotiable controls in enterprise deployments

- Ethics and transparency regarding data usage have become critical trust factors in high-ticket B2B sales, where automation must enhance rather than degrade buyer experience[4]

What Are Ethical Agentic AI Frameworks and Why Do They Matter for B2B Decision Engines?

Ethical Agentic AI Frameworks are governance architectures that embed bias detection, fairness enforcement, and regulatory compliance controls directly into autonomous AI systems making high-stakes business decisions. For B2B decision engines handling contracts worth $50,000 to millions—such as enterprise software pricing, credit approvals, or supply chain allocations—these frameworks prevent discriminatory outcomes, legal liability, and reputational damage.

Traditional AI systems operate with periodic audits and retrospective bias testing. Agentic AI frameworks flip this model by deploying dedicated bias auditors that continuously simulate edge cases and demographic scenarios against live production models[1]. These auditors detect statistically significant bias patterns in real time and generate explainability reports for regulators.

The stakes are particularly high in 2026 because:

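- The EU AI Act is being phased in: its prohibitions have applied since February 2025, and most obligations for high-risk AI systems, including the mandate for "effective human oversight", are scheduled to apply from August 2026 under the Act's original timeline[2]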

- Industry forecasts predict over 2,000 legal claims related to insufficient AI guardrails this year[5]

- High-ticket B2B buyers increasingly demand transparency about how AI systems use their data and make recommendations[4]

Common mistake: Organizations assume existing compliance frameworks for traditional software apply to agentic AI. They don't. Agentic systems make autonomous decisions across multiple interactions, creating compounding risk that requires specialized governance.

Choose this approach if: Your B2B decision engine handles regulated industries (finance, healthcare, government contracts), processes decisions above $50,000, or serves buyers in EU jurisdictions subject to the AI Act.


How Do Bias Detection Mechanisms Work in Production Agentic AI Systems?

Bias detection in production agentic AI operates through continuous auditing agents that run parallel to primary decision engines, testing outputs against fairness metrics before execution. These auditors simulate diverse demographic scenarios, edge cases, and protected-class variations to identify statistically significant disparities in treatment or outcomes.

The architecture includes three core components:

1. Real-Time Scenario Simulators

Generate synthetic test cases representing protected classes (age, gender, ethnicity, disability status) and feed them through the decision engine alongside real requests. Compare outcomes across groups using statistical parity, equalized odds, and disparate impact ratios.

2. Anomaly Detection Algorithms

Monitor decision patterns for sudden shifts in approval rates, pricing recommendations, or resource allocations that correlate with demographic attributes. Flag deviations exceeding predefined thresholds (typically the four-fifths, or 80%, rule for disparate impact, under which a group is flagged when its selection rate falls below 80% of the highest group's rate).

3. Explainability Report Generators

Produce human-readable audit reports documenting which input features most influenced decisions, whether protected attributes leaked into recommendations through proxy variables, and which specific transactions triggered fairness violations.
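The group comparison described for the scenario simulator can be sketched in a few lines. This is a minimal illustration, not a production auditor: the function names, the `(group, approved)` input shape, and the synthetic sample are all assumptions made for the example. It covers selection rates, the disparate impact ratio, and the statistical parity difference; equalized odds is omitted because it also needs ground-truth outcomes for each case.

```python
from collections import defaultdict

def selection_rates(decisions):
    """Return the approval rate per group from (group, approved) pairs."""
    totals = defaultdict(int)
    approved = defaultdict(int)
    for group, ok in decisions:
        totals[group] += 1
        approved[group] += int(ok)
    return {group: approved[group] / totals[group] for group in totals}

def disparate_impact_ratio(rates):
    """Lowest group selection rate divided by the highest."""
    highest = max(rates.values())
    if highest == 0:
        return 1.0  # no group was approved at all, so there is no disparity to measure
    return min(rates.values()) / highest

def statistical_parity_difference(rates):
    """Gap between the highest and lowest group selection rates."""
    return max(rates.values()) - min(rates.values())

def passes_four_fifths(rates, threshold=0.8):
    """Apply the four-fifths (80%) rule to the disparate impact ratio."""
    return disparate_impact_ratio(rates) >= threshold

# Synthetic test cases (hypothetical data): 60% approval for group_a, 42% for group_b.
sample = (
    [("group_a", True)] * 60 + [("group_a", False)] * 40
    + [("group_b", True)] * 42 + [("group_b", False)] * 58
)
rates = selection_rates(sample)
flagged = not passes_four_fifths(rates)  # ratio is about 0.70, below the 0.8 threshold
```

In this synthetic sample the ratio is roughly 0.70, so the batch would be flagged. A real auditor would also need a significance test, since small samples can cross the threshold by chance.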

Security agents provide a complementary control layer by detecting anomalous agentic AI behavior patterns that may indicate model drift, data poisoning, or adversarial manipulation[7].

Edge case to watch: Bias can emerge from seemingly neutral features. A B2B pricing engine that factors "company size" may inadvertently discriminate against minority-owned businesses that tend to be smaller, even without directly considering ownership demographics.

Implementation rule: For high-risk B2B decisions (credit, insurance, employment-related services), run bias audits on 100% of transactions. For medium-risk decisions (marketing personalization, content recommendations), sample at least 10% of daily volume with full weekly audits.
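One way to apply this sampling rule is deterministic, hash-based selection, so that the same transaction always gets the same audit decision and the choice can be reproduced later. The sketch below is an assumption-laden illustration: `AUDIT_SAMPLE_RATE`, `should_audit`, and the tier names are hypothetical, and hashing yields approximately (not strictly at least) 10%, so a team that needs a hard floor would set the rate slightly higher or top up the sample at the end of the day.

```python
import hashlib

# Hypothetical tier names; the rates follow the implementation rule above.
AUDIT_SAMPLE_RATE = {"high": 1.0, "medium": 0.10}

def should_audit(transaction_id: str, risk_tier: str) -> bool:
    """Decide whether a transaction is audited, reproducibly for a given ID."""
    rate = AUDIT_SAMPLE_RATE[risk_tier]
    if rate >= 1.0:
        return True  # high-risk decisions are always audited
    # Map the transaction ID to a stable number in [0, 1) and compare to the rate.
    digest = hashlib.sha256(transaction_id.encode("utf-8")).digest()
    bucket = int.from_bytes(digest[:8], "big") / 2**64
    return bucket < rate
```

Because the decision depends only on the ID, an auditor can later confirm that a skipped transaction was skipped by rule rather than by choice.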


What Fairness Enforcement Standards Apply to High-Ticket B2B Decision Engines?

Fairness enforcement for high-ticket B2B decision engines must satisfy multiple regulatory frameworks simultaneously: EU AI Act requirements for high-risk systems, ISO/IEC 42001 management standards, GDPR data protection rules, and sector-specific mandates (financial services, healthcare, government procurement).

EU AI Act Compliance (High-Risk Classification)

B2B decision engines that determine access to essential services, credit, or employment opportunities fall under "high-risk" classification. Requirements include:

- Documented risk management systems

- High-quality training data with bias mitigation

- Technical documentation and audit trails

- Human oversight mechanisms

- Accuracy, robustness, and cybersecurity measures

ISO/IEC 42001 Management Framework

This international standard provides the structure to document AI oversight and demonstrate control to regulators[2]. Key elements:

- Defined roles and responsibilities for AI governance

- Risk assessment and treatment procedures

- Performance monitoring and continuous improvement

- Incident response and corrective action protocols

Sector-Specific Regulations

Financial services must additionally comply with anti-money laundering (AML) rules and sanctions screening. Healthcare decisions require HIPAA compliance. Government contractors face FAR/DFARS requirements.

Continuous compliance monitoring systems now track regulatory publications and cross-reference company transactions against current rules, proactively alerting on violations before escalation[1]. Enterprise solutions like Vanta simultaneously map controls across multiple frameworks to enable continuous compliance rather than periodic audits[1].

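Decision rule: If your B2B decision engine operates in EU markets and affects access to services, credit, or employment, assume high-risk classification and implement full EU AI Act controls. The Act itself sets no monetary threshold, so treat a value cutoff such as €10,000 only as an internal heuristic for deciding which systems to address first, not as a test of whether the Act applies.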

Common violation: Organizations implement bias detection but fail to document the governance processes around how detected biases are remediated, who approves corrective actions, and how effectiveness is verified—all required for regulatory compliance.


How Should Organizations Implement Governance Agents as Meta-Layer Controls?

Governance agents function as independent AI systems that monitor other agentic AI systems for policy violations, creating a meta-layer of automated oversight. This architecture shifts governance from manual compliance overhead to an intelligent enabler that allows higher-value autonomous deployments while maintaining control[7].

Implementation Architecture:

Primary Layer: Operational Agents

These are your B2B decision engines—pricing optimizers, contract analyzers, risk assessors, lead scoring systems. They operate with defined autonomy boundaries and decision authority limits.

Meta Layer: Governance Agents

Independent agents that:

- Continuously review operational agent decisions against policy rules

- Detect pattern violations across multiple systems

- Escalate high-risk decisions to human review queues

- Generate compliance reports for audit trails

- Update operational constraints based on regulatory changes

Human Oversight Layer

Cross-functional governance councils that:

- Define ethical boundaries and policy rules

- Review escalated decisions requiring human judgment

- Approve governance agent policy updates

- Conduct periodic effectiveness assessments

Key advantage: Governance agents scale oversight without proportionally scaling human resources. One governance agent can monitor dozens of operational agents 24/7, catching violations that would slip through periodic manual audits.

Security agents complement governance agents by focusing on technical anomalies—unusual API calls, unexpected data access patterns, or model behavior drift—while governance agents enforce business policy and ethical rules[7].
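The meta-layer pattern can be illustrated with a small read-only reviewer that checks each operational decision against policy rules, records the result for the audit trail, and queues violations for human review. Everything named here (`Decision`, `PolicyRule`, `GovernanceAgent`, the two example rules) is hypothetical and chosen for illustration; the protected attributes and the $50,000 limit echo figures used elsewhere in this article.

```python
from dataclasses import dataclass, field
from typing import Callable

@dataclass(frozen=True)
class Decision:
    """An operational agent's decision, as read from its output log."""
    agent_id: str
    category: str
    amount: float
    features_used: tuple

@dataclass(frozen=True)
class PolicyRule:
    name: str
    violated_by: Callable[[Decision], bool]
    escalate: bool  # True sends a violation to the human review queue

@dataclass
class GovernanceAgent:
    rules: list
    review_queue: list = field(default_factory=list)
    report: list = field(default_factory=list)

    def review(self, decision: Decision) -> list:
        """Check one decision, log the result, and escalate if required."""
        violations = [rule for rule in self.rules if rule.violated_by(decision)]
        names = [rule.name for rule in violations]
        self.report.append((decision.agent_id, names))  # compliance report entry
        if any(rule.escalate for rule in violations):
            self.review_queue.append(decision)
        return names

PROTECTED = {"age", "gender", "ethnicity", "disability_status"}
rules = [
    PolicyRule("no_protected_attributes",
               lambda d: bool(PROTECTED & set(d.features_used)), escalate=True),
    PolicyRule("authority_limit",
               lambda d: d.amount > 50_000, escalate=True),
]

agent = GovernanceAgent(rules)
flagged = agent.review(Decision("pricing-1", "pricing", 75_000.0, ("company_size",)))
```

The reviewer never mutates the decision it inspects, which mirrors the read-only access recommended for the first deployment phase; blocking or modifying decisions would be a later, separately approved capability.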

Implementation sequence:

- Document existing policies (30-45 days): Codify ethical guidelines, regulatory requirements, and business rules into machine-readable policy sets

- Deploy governance agents (60-90 days): Implement monitoring layer with read-only access to operational agent logs and decision outputs

- Establish escalation workflows (30 days): Define human review triggers, approval authorities, and override mechanisms

- Enable enforcement (phased rollout): Gradually grant governance agents authority to block or modify operational agent decisions

Edge case: Governance agents themselves require oversight. Implement "governance of governance" through periodic human audits of governance agent decisions to prevent overly restrictive controls that block legitimate business operations.


What's the Difference Between Human-in-the-Loop and Human-on-the-Loop for B2B Decisions?

Human-in-the-loop (HITL) and human-on-the-loop (HOTL) represent fundamentally different oversight models that determine when autonomous agents require human approval versus retrospective review. The choice depends on decision risk, regulatory requirements, and operational velocity needs[2].

Human-in-the-Loop (High-Risk Decisions)

Definition: Agents analyze data and generate recommendations, but humans must approve before execution.

Required for:

- Financial credit decisions above $50,000

- Healthcare coverage determinations

- Employment-related assessments (hiring, promotion, termination)

- Government contract awards

- Legal contract terms negotiation

Workflow:

- Agent analyzes request and generates recommendation

- System presents recommendation with explainability report

- Human reviewer approves, modifies, or rejects

- Only approved actions execute

- Decision and rationale logged to audit trail

Regulatory driver: The EU AI Act (Article 14) explicitly requires human oversight for high-risk systems, interpreted by most legal experts as mandating HITL for decisions affecting fundamental rights[2].

Human-on-the-Loop (Lower-Risk Decisions)

Definition: Agents execute decisions autonomously while humans monitor logs and can intervene or override retrospectively.

Appropriate for:

- Marketing personalization and content recommendations

- Inventory optimization and supply chain routing

- Customer service tier assignments

- Meeting scheduling and calendar management

- Standard contract renewals below risk thresholds

Workflow:

- Agent analyzes request and executes decision

- Decision logged to monitoring dashboard

- Humans review logs periodically (daily/weekly)

- Anomalies or patterns of concern trigger investigation

- Humans can override past decisions and update agent constraints

Operational advantage: HOTL enables true automation benefits—24/7 operation, sub-second response times, and massive scale—while maintaining accountability through comprehensive audit trails.

Decision matrix:

| Decision Type | Financial Threshold | Regulatory Impact | Oversight Model |
|---|---|---|---|
| Enterprise software pricing | >$50,000 | Potential discrimination | HITL |
| Credit/financing approval | >$10,000 | Financial regulation | HITL |
| Marketing email personalization | Any | Low | HOTL |
| Contract renewal (standard terms) | <$25,000 | Low | HOTL |
| Healthcare coverage determination | Any | HIPAA, patient rights | HITL |
| Lead scoring/prioritization | Any | Low | HOTL |

Common mistake: Organizations implement HOTL for high-risk decisions to achieve automation efficiency, then face regulatory violations when audits reveal insufficient oversight. The 2,000+ legal claims anticipated in 2026 are expected to stem largely from this miscalculation[5].

Best practice: Start with HITL for all decisions, collect data on false positive rates (unnecessary human reviews), then gradually migrate lower-risk categories to HOTL based on demonstrated safety records.
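The decision matrix and the "start with HITL" practice can be combined into a simple router: a decision runs under HOTL only when it matches a row explicitly listed as HOTL, and everything else defaults to HITL. This is a sketch under assumptions: the category keys and the `oversight_model` function are hypothetical, and treating unlisted cases (for example, a contract renewal at or above $25,000) as HITL is a conservative reading, since the matrix does not specify them.

```python
from enum import Enum

class Oversight(Enum):
    HITL = "human-in-the-loop"
    HOTL = "human-on-the-loop"

# HOTL rows from the decision matrix.
# Value is the exclusive upper amount limit in dollars; None means any amount.
HOTL_ROWS = {
    "marketing_email_personalization": None,
    "lead_scoring": None,
    "contract_renewal_standard": 25_000,
}

def oversight_model(category: str, amount: float) -> Oversight:
    """Return HOTL only for explicitly approved categories; default to HITL."""
    if category in HOTL_ROWS:
        limit = HOTL_ROWS[category]
        if limit is None or amount < limit:
            return Oversight.HOTL
    return Oversight.HITL
```

Migrating a category to HOTL then becomes a reviewable one-line change to `HOTL_ROWS`, which keeps the approval of new autonomy visible in version history.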


How Do Cross-Functional Governance Councils Operationalize Ethical AI Frameworks?

Cross-functional governance councils translate abstract ethical principles into concrete operational constraints that agentic AI systems can enforce. These councils bring together legal, ethics, IT, operations, compliance, and executive stakeholders to codify organizational values into agent logic[2].

Core Responsibilities:

1. Policy Development

Define "rules of engagement" for agentic systems:

- Which decisions require human approval (HITL vs. HOTL thresholds)

- Protected attributes that must never influence decisions

- Fairness metrics and acceptable tolerance ranges

- Escalation triggers for edge cases

- Override authorities and emergency stop procedures

2. Ethical Dilemma Resolution

Address conflicts between competing values:

- Efficiency vs. fairness (when bias detection slows decisions)

- Transparency vs. competitive advantage (explainability of proprietary models)

- Autonomy vs. control (how much independence to grant agents)

- Innovation vs. risk (when to deploy experimental capabilities)

3. Incident Response

When bias violations or policy breaches occur:

- Investigate root causes (data quality, model drift, policy gaps)

- Determine remediation for affected parties

- Update agent constraints to prevent recurrence

- Document lessons learned for regulatory audits

4. Continuous Improvement

Quarterly reviews of:

- Agent performance against fairness metrics

- Human override rates and reasons

- Regulatory landscape changes

- Stakeholder feedback and concerns

Composition Best Practices:

- Legal counsel (employment law, regulatory compliance): Interprets regulatory requirements and assesses legal risk

- Ethics officer or external ethicist: Provides moral framework and stakeholder perspective

- Chief AI Officer or senior data scientist: Explains technical capabilities and limitations

- Operations leaders: Represents business efficiency and customer experience needs

- Compliance officer: Ensures documentation meets audit standards

- Executive sponsor (C-level): Provides decision authority and resource allocation

Meeting cadence:

- Weekly during initial deployment (first 90 days)

- Biweekly during stabilization (months 4-12)

- Monthly for mature systems with quarterly deep reviews

Decision-making authority:

Councils should have explicit authority to:

- Pause or roll back agent deployments showing bias patterns

- Approve new decision categories for agent autonomy

- Allocate budget for remediation and system improvements

- Escalate systemic issues to board level

Documentation requirements:

ISO/IEC 42001 and EU AI Act compliance demand written records of:

- Policy decisions and rationale

- Risk assessments and mitigation plans

- Incident investigations and corrective actions

- Performance monitoring results

- Stakeholder consultation processes

Common failure mode: Councils become "rubber stamp" bodies that approve IT recommendations without substantive ethical analysis. Prevent this by requiring ethics officers or external members to formally sign off on high-risk deployments.

Success metric: Council effectiveness should be measured by reduction in bias incidents, improved fairness metrics, faster regulatory audit completion, and increased stakeholder trust scores—not by number of meetings held.


What Audit Trail and Documentation Standards Must Ethical Agentic AI Systems Maintain?

Audit trails for ethical agentic AI systems must capture complete decision provenance—every input, intermediate reasoning step, final output, and human intervention—with sufficient detail to reconstruct decisions months or years later for regulatory investigations, legal discovery, or bias audits[2].

Mandatory Audit Trail Components:

1. Input Data Logging

- Complete request parameters and context

- User/customer identifiers (with GDPR-compliant access controls)

- Timestamp and system state at decision time

- Data source attribution for all features used

2. Model Inference Records

- Model version and training data lineage

- Feature importance scores for the specific decision

- Confidence levels and uncertainty estimates

- Alternative recommendations considered but not selected

3. Policy Compliance Checks

- Which governance rules were evaluated

- Bias detection audit results (pass/fail for each fairness metric)

- Regulatory compliance verification (EU AI Act, GDPR, sector rules)

- Escalation triggers evaluated and outcomes

4. Human Oversight Actions

- HITL approval/rejection decisions with reviewer identity

- Rationale for human overrides of agent recommendations

- Time spent in human review queue

- Override patterns tracked for training data

5. Outcome Tracking

- Final decision executed

- Customer/stakeholder acceptance or appeal

- Business results (contract value, conversion, retention)

- Fairness impact assessment (demographic distribution of outcomes)
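A structured record makes the five component groups above concrete. The sketch below is one possible shape, not a regulatory schema: the `AuditRecord` class and every field name are assumptions for illustration, and a real deployment would add the remaining items (data source attribution, alternatives considered, outcome tracking) in the same way.

```python
import json
from dataclasses import dataclass, asdict, field
from datetime import datetime, timezone
from typing import Optional

@dataclass
class AuditRecord:
    # 1. Input data
    request: dict
    subject_id: str
    # 2. Model inference
    model_version: str
    feature_importance: dict
    confidence: float
    # 3. Policy compliance checks (metric name -> passed)
    fairness_checks: dict
    # 4. Human oversight (None when no human acted on the decision)
    reviewer_id: Optional[str]
    override_rationale: Optional[str]
    # 5. Outcome
    final_decision: str
    timestamp: str = field(
        default_factory=lambda: datetime.now(timezone.utc).isoformat()
    )

    def to_json(self) -> str:
        """Serialize with sorted keys so identical records hash identically."""
        return json.dumps(asdict(self), sort_keys=True)

record = AuditRecord(
    request={"product": "enterprise_plan", "seats": 500},
    subject_id="cust-001",
    model_version="pricing-v1",
    feature_importance={"seats": 0.6, "company_size": 0.4},
    confidence=0.91,
    fairness_checks={"disparate_impact": True},
    reviewer_id=None,
    override_rationale=None,
    final_decision="approve",
)
```

Sorting keys during serialization is a small design choice that pays off later, because integrity checks and deduplication both rely on a stable byte representation.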

Retention Requirements:

| Record Type | Retention Period | Regulatory Driver |
|---|---|---|
| High-risk decision logs | 7-10 years | EU AI Act, financial regulations |
| Bias audit reports | 5 years minimum | Discrimination law statutes of limitations |
| Model training data lineage | Life of model + 3 years | AI Act technical documentation |
| Human override rationales | 5 years | Employment and civil rights law |
| Incident investigation reports | 10 years | Corporate governance standards |

Access Controls:

Audit logs contain sensitive data requiring role-based access:

- Compliance officers: Full read access for audits

- Data scientists: Anonymized access for model improvement

- Legal counsel: Full access during investigations

- Regulators: Controlled access during examinations

- Data subjects: Right to access their own decision records (GDPR Article 15)

Tamper-Proof Storage:

Use append-only logging systems with cryptographic hashing to prevent retroactive modification. Blockchain or distributed ledger technologies provide verifiable immutability for high-stakes applications.
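The hashing idea can be shown with a small hash chain, where each entry's hash covers the previous entry's hash, so changing any earlier record invalidates everything after it. This is an in-memory sketch with hypothetical names (`HashChainLog`, `GENESIS`); it detects tampering but does not prevent it, and a real system would also store the latest hash somewhere the log's operators cannot rewrite.

```python
import hashlib
import json

GENESIS = "0" * 64  # placeholder "previous hash" for the first entry

class HashChainLog:
    """Append-only log where each entry commits to the one before it."""

    def __init__(self):
        self._entries = []

    def append(self, record: dict) -> str:
        prev = self._entries[-1]["hash"] if self._entries else GENESIS
        payload = json.dumps(record, sort_keys=True)
        digest = hashlib.sha256((prev + payload).encode("utf-8")).hexdigest()
        self._entries.append({"prev": prev, "payload": payload, "hash": digest})
        return digest

    def verify(self) -> bool:
        """Recompute every hash from the start; False means the log was altered."""
        prev = GENESIS
        for entry in self._entries:
            expected = hashlib.sha256(
                (prev + entry["payload"]).encode("utf-8")
            ).hexdigest()
            if entry["prev"] != prev or entry["hash"] != expected:
                return False
            prev = entry["hash"]
        return True

log = HashChainLog()
log.append({"decision_id": 1, "outcome": "approve"})
log.append({"decision_id": 2, "outcome": "escalate"})
```

Running `log.verify()` on a schedule is one way to implement the daily integrity check listed in the implementation checklist.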

Common compliance gap: Organizations log decisions but fail to capture the reasoning behind them—which specific features influenced the outcome, why alternatives were rejected, and how fairness checks passed. Regulators increasingly demand explainability, not just decision records.

Implementation checklist:

- Structured logging format (JSON/XML) for machine parsing

- Automated log integrity verification (daily hash checks)

- Separate storage from production systems (prevent operational access)

- Encryption at rest and in transit

- Documented data retention and destruction procedures

- Regular audit log completeness testing (quarterly)

- Incident response procedures for log gaps or tampering

- GDPR-compliant data subject access request (DSAR) workflows

Performance consideration: Comprehensive logging adds 5-15% overhead to decision latency. For high-volume systems, implement asynchronous logging where decision execution proceeds while audit records write to separate storage.
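Asynchronous logging of this kind can be sketched with a queue and a background writer: the decision path only enqueues the record and returns, while a separate task drains the queue to storage. The names below (`audit_writer`, `decide`, the in-memory `sink`) are hypothetical stand-ins, and the `asyncio.sleep(0)` call merely represents a slow write to separate storage.

```python
import asyncio

async def audit_writer(queue: asyncio.Queue, sink: list) -> None:
    """Drain audit records to storage until a None sentinel arrives."""
    while True:
        record = await queue.get()
        try:
            if record is None:
                return
            await asyncio.sleep(0)  # stands in for a slow write to separate storage
            sink.append(record)
        finally:
            queue.task_done()

async def decide(request: dict, queue: asyncio.Queue) -> dict:
    """Make a decision and enqueue its audit record without waiting for the write."""
    decision = {"request": request, "outcome": "approve"}
    queue.put_nowait(decision)
    return decision

async def main():
    queue = asyncio.Queue()
    sink = []
    writer = asyncio.create_task(audit_writer(queue, sink))
    results = [await decide({"id": i}, queue) for i in range(3)]
    await queue.join()      # wait until every queued record has been written
    queue.put_nowait(None)  # tell the writer to stop
    await writer
    return results, sink

if __name__ == "__main__":
    results, sink = asyncio.run(main())
    print(len(sink))
```

The trade-off is durability: records that are queued but not yet written are lost if the process crashes, so high-risk decision paths may still need a durable queue or a synchronous write before execution.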

Best practice: Conduct quarterly "mock audits" where internal compliance teams request random decision reconstructions. Verify that audit trails provide sufficient detail to satisfy regulatory standards before actual examinations occur.


What Are the Emerging Governance Standards and Frameworks for 2026?

The 2026 governance landscape for ethical agentic AI centers on three converging standards: the EU AI Act as its obligations phase in, ISO/IEC 42001 management systems, and emerging industry-specific frameworks that translate regulatory requirements into operational controls[8].

EU AI Act (Phased Enforcement)

The Act categorizes AI systems by risk level and imposes progressively stricter requirements:

Prohibited Practices:

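- Social scoring, whether by public or private actors

- Exploitation of vulnerabilities linked to age, disability, or social or economic situation

- Subliminal or purposefully manipulative techniques

- Real-time remote biometric identification in publicly accessible spaces for law enforcement (with exceptions)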

High-Risk Systems (most B2B decision engines fall here):

- Mandatory conformity assessments before deployment

- Fundamental rights impact assessments

- Post-market monitoring and incident reporting

- CE marking requirements

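- Penalties up to €15 million or 3% of global annual turnover for breaching high-risk obligations (the €35 million or 7% ceiling applies to prohibited practices)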

Transparency Requirements:

- Disclosure when interacting with AI systems

- Explainability of decisions affecting individuals

- Human oversight mechanisms

ISO/IEC 42001: AI Management System

This international standard provides the operational framework to demonstrate EU AI Act compliance[2]:

- Context establishment: Define organizational AI landscape and stakeholder needs

- Leadership and governance: Assign accountability and allocate resources

- Planning: Conduct risk assessments and set objectives

- Support: Ensure competence, awareness, and documentation

- Operation: Implement controls for AI lifecycle management

- Performance evaluation: Monitor, measure, audit, and review

- Improvement: Address nonconformities and continuously enhance

Industry-Specific Frameworks:

Financial Services:

- NIST AI Risk Management Framework adaptation

- Basel Committee guidance on AI/ML risk management

- Federal Reserve SR 11-7 and OCC Bulletin 2011-12 model risk management guidance

Healthcare:

- FDA Software as Medical Device (SaMD) framework

- HIPAA Security Rule AI-specific guidance

- Clinical validation requirements for diagnostic AI

Government Contracting:

- FedRAMP AI security controls

- NIST AI 100-1 (AI Risk Management Framework 1.0)

- DoD Responsible AI guidelines

Multi-Framework Compliance Mapping:

Leading organizations use automated compliance platforms that simultaneously map controls across frameworks[1]. For example, a single "bias detection audit" control satisfies:

- EU AI Act Article 10 (data governance)

- ISO/IEC 42001 Section 6.1 (risk assessment)

- NIST AI RMF MEASURE function

- Sector-specific fairness requirements
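A control-to-requirement map like the one listed above can be kept as plain data and queried for gaps. The sketch below encodes only the three named mappings from this section (the sector-specific entry is left out because it is not specified), and the names `CONTROL_MAP`, `requirements_covered`, and `uncovered_requirements` are assumptions for illustration rather than any platform's API.

```python
# Control -> requirements it provides evidence for, as listed in this section.
CONTROL_MAP = {
    "bias_detection_audit": [
        "EU AI Act Article 10 (data governance)",
        "ISO/IEC 42001 6.1 (risk assessment)",
        "NIST AI RMF MEASURE function",
    ],
}

def requirements_covered(passing_controls):
    """Return every requirement evidenced by at least one passing control."""
    covered = set()
    for control in passing_controls:
        covered.update(CONTROL_MAP.get(control, []))
    return covered

def uncovered_requirements(passing_controls):
    """Return mapped requirements that no passing control currently evidences."""
    all_requirements = {req for reqs in CONTROL_MAP.values() for req in reqs}
    return sorted(all_requirements - requirements_covered(passing_controls))
```

With this shape, a failing control immediately shows which framework requirements lose their evidence, which is the practical benefit of mapping once and reusing across frameworks.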

2026 Trends to Watch:

1. Governance Agents as Compliance Enablers

Shift from viewing governance as constraint to strategic differentiator. Organizations with mature governance agents can deploy agentic AI faster and more safely than competitors[7].

2. Continuous Compliance Over Point-in-Time Audits

Real-time monitoring systems that track regulatory changes and automatically update agent constraints replace annual compliance reviews[1].

3. Federated Governance Models

Large enterprises implement hub-and-spoke governance where central councils set policy but business units maintain operational oversight tailored to their risk profiles.

4. Third-Party Governance Auditors

Independent firms offering "governance-as-a-service" emerge to provide objective assessments and regulatory liaison for organizations lacking internal expertise.

Implementation priority matrix:

| Framework | Implementation Timeline | Mandatory vs. Voluntary | Penalties for Non-Compliance |
|---|---|---|---|
| EU AI Act | Immediate (2026) | Mandatory (EU operations) | Up to €35M or 7% revenue |
| ISO/IEC 42001 | 12-18 months | Voluntary (certification) | Competitive disadvantage |
| NIST AI RMF | 6-12 months | Voluntary (US best practice) | Reputational risk |
| Sector-specific | Varies by industry | Often mandatory | Regulatory sanctions |

Quick start recommendation: Begin with ISO/IEC 42001 implementation, which provides the management system structure to satisfy EU AI Act requirements and adapt to sector-specific frameworks as they mature.


Frequently Asked Questions

What makes a B2B decision engine "high-risk" under the EU AI Act?

A B2B decision engine is high-risk if it determines access to essential services (credit, insurance, utilities), influences employment decisions (hiring, promotion, termination), or affects legal rights. Financial thresholds don't define risk—impact on fundamental rights does. If your engine could deny someone a loan, job opportunity, or essential service, it's high-risk regardless of transaction size.

How often should bias audits run in production agentic AI systems?

For high-risk B2B decisions, run bias audits on 100% of transactions in real time before execution. For medium-risk decisions, sample at least 10% of daily volume with comprehensive weekly audits. Low-risk systems (marketing personalization) can use monthly audits with anomaly detection alerts for unusual patterns between scheduled reviews.

Can governance agents fully replace human oversight?

No. Governance agents scale oversight and catch policy violations faster than humans, but the EU AI Act explicitly requires "effective human oversight" for high-risk systems. Governance agents handle routine monitoring and escalate edge cases, ethical dilemmas, and high-stakes decisions to human councils for judgment. Think of them as force multipliers, not replacements.

What's the typical cost to implement ethical agentic AI frameworks?

Initial implementation ranges from $250,000 to $2 million depending on system complexity, data volume, and regulatory scope. Costs include governance platform licensing ($50K-$300K annually), consulting for policy development ($100K-$500K), technical integration (6-12 engineer-months), and ongoing monitoring infrastructure. Organizations facing potential €35M penalties view this as risk mitigation, not pure cost.

How do audit trail requirements affect system performance?

Comprehensive logging adds 5-15% latency overhead to individual decisions. For high-volume systems processing thousands of decisions per minute, implement asynchronous logging where decision execution proceeds while audit records write to separate storage. Modern distributed logging systems (Kafka, Kinesis) handle millions of events per second with minimal performance impact.

What happens if bias is detected after decisions have been executed?

Governance frameworks must include incident response procedures: (1) immediately pause the affected decision category, (2) investigate root cause through audit trail analysis, (3) identify and notify affected parties, (4) determine appropriate remediation (decision reversal, compensation, corrective action), (5) update agent constraints to prevent recurrence, and (6) document the incident for regulatory reporting. The EU AI Act requires post-market monitoring and incident reporting for high-risk systems.

Do small B2B SaaS companies need the same governance as enterprises?

Regulatory requirements don't scale by company size—they scale by risk level. A startup's pricing algorithm that affects access to essential services faces the same EU AI Act requirements as an enterprise system. However, implementation can be proportionate: smaller companies may use commercial governance platforms rather than building custom solutions, and simpler approval workflows rather than large cross-functional councils.

How should organizations handle conflicts between efficiency and fairness?

Cross-functional governance councils must explicitly prioritize fairness over efficiency for high-risk decisions where regulatory requirements apply. For lower-risk decisions, councils can define acceptable trade-offs—for example, allowing 5% efficiency loss to achieve 95% fairness metric compliance. Document these trade-off decisions in governance policies to demonstrate deliberate ethical consideration during audits.

Can agentic AI systems self-correct bias without human intervention?

Current technology allows agents to detect bias and pause decisions for human review, but self-correction without oversight creates accountability gaps. Best practice: governance agents can automatically apply predefined corrections (like adjusting thresholds to achieve statistical parity) for low-risk decisions, but high-risk bias remediation requires human council approval to ensure corrections don't introduce new problems.

What's the difference between explainability and audit trails?

Audit trails document what happened—complete decision records, inputs, outputs, and timing. Explainability describes why it happened—which features influenced the decision, how the model weighted different factors, and why alternatives were rejected. Both are required: audit trails satisfy documentation requirements, while explainability enables bias detection and human oversight. The EU AI Act requires both for high-risk systems.

How do governance frameworks handle multi-jurisdictional compliance?

Organizations operating globally need compliance mapping that identifies the strictest applicable standard for each decision type. For example, EU AI Act requirements apply to any decision affecting EU residents regardless of where the company is based. Implement governance frameworks that satisfy the most stringent applicable regulation (usually EU AI Act for B2B systems), which typically ensures compliance with less strict jurisdictions.

What metrics should executives track to monitor governance effectiveness?

Key governance metrics: (1) bias incident rate (target: <0.1% of decisions), (2) regulatory audit findings (target: zero material findings), (3) human override rate (indicates agent calibration), (4) time-to-remediation for detected issues (target: <24 hours for high-risk), (5) governance agent coverage (% of decisions monitored), (6) audit trail completeness (target: 100%), and (7) stakeholder trust scores from customer surveys. Present these monthly to executive leadership and quarterly to boards.
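Several of these metrics can be derived directly from decision logs. The sketch below computes four of them and flags breaches of the two numeric targets quoted above (bias incident rate below 0.1%, audit trail completeness at 100%). The `DecisionLog` fields and function names are hypothetical and would depend on what a given logging system actually captures.

```python
from dataclasses import dataclass


@dataclass
class DecisionLog:
    """Hypothetical per-decision flags captured by the audit infrastructure."""
    monitored: bool        # a governance agent reviewed this decision
    audit_complete: bool   # full audit record was written
    bias_incident: bool    # a bias incident was confirmed
    human_override: bool   # a human overrode the agent


def governance_metrics(logs):
    """Compute rate-style governance metrics over a list of DecisionLog."""
    n = len(logs)
    if n == 0:
        raise ValueError("no decisions logged")

    def rate(attr):
        return sum(getattr(log, attr) for log in logs) / n

    return {
        "bias_incident_rate": rate("bias_incident"),
        "human_override_rate": rate("human_override"),
        "governance_coverage": rate("monitored"),
        "audit_trail_completeness": rate("audit_complete"),
    }


def target_breaches(metrics):
    """Return the metrics that miss the targets stated in the answer above."""
    breaches = []
    if metrics["bias_incident_rate"] >= 0.001:       # target: < 0.1%
        breaches.append("bias_incident_rate")
    if metrics["audit_trail_completeness"] < 1.0:    # target: 100%
        breaches.append("audit_trail_completeness")
    return breaches


logs = ([DecisionLog(True, True, False, False)] * 998
        + [DecisionLog(True, True, True, True)] * 2)
print(target_breaches(governance_metrics(logs)))
```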

Conclusion

Ethical Agentic AI Frameworks for high-ticket B2B decision engines are no longer optional in 2026. With EU AI Act obligations taking effect in phases, over 2,000 legal claims anticipated from insufficient guardrails, and buyer trust increasingly dependent on transparency, organizations must embed bias detection, fairness enforcement, and regulatory compliance directly into autonomous AI workflows.

The frameworks outlined here—continuous bias auditing, governance agents as meta-layer controls, human-in-the-loop vs. human-on-the-loop differentiation, cross-functional governance councils, comprehensive audit trails, and ISO/IEC 42001 management systems—provide the operational structure to deploy agentic AI safely and compliantly in high-stakes B2B contexts.

Actionable Next Steps:

- Conduct a risk assessment (Week 1-2): Classify your B2B decision engines by EU AI Act risk level and identify which require immediate governance implementation

- Establish a cross-functional governance council (Week 3-4): Assemble legal, ethics, IT, operations, compliance, and executive stakeholders with explicit decision authority

- Document existing policies (Month 2): Codify ethical guidelines, regulatory requirements, and business rules into machine-readable policy sets

- Implement audit trail infrastructure (Month 2-3): Deploy comprehensive logging systems that capture decision provenance for regulatory compliance

- Deploy bias detection auditors (Month 3-4): Integrate continuous fairness monitoring into production decision engines

- Launch governance agents (Month 4-5): Implement meta-layer monitoring with escalation workflows for policy violations

- Pursue ISO/IEC 42001 certification (Month 6-18): Formalize your AI management system to demonstrate regulatory control

- Conduct quarterly governance effectiveness reviews: Monitor bias metrics, audit findings, override rates, and stakeholder trust to drive continuous improvement

The organizations that treat ethical AI governance as a strategic differentiator rather than compliance overhead will deploy agentic systems faster, more safely, and more profitably than competitors that still view governance as a constraint. In high-ticket B2B markets where a single biased decision can cost millions in lost contracts or regulatory penalties, ethical agentic AI frameworks are the foundation for sustainable autonomous operations.

For organizations building comprehensive AI strategies, explore our resources on AI marketing and digital strategy to understand how ethical frameworks integrate across business functions.

References

[1] Top 50 Agentic AI Implementations Use Cases To Learn From – https://8allocate.com/blog/top-50-agentic-ai-implementations-use-cases-to-learn-from/

[2] Agentic AI Governance – https://www.ewsolutions.com/agentic-ai-governance/

[4] B2B Sales In 2026 The Era Of Agentic AI – https://smart-team.io/en/b2b-sales-in-2026-the-era-of-agentic-ai/

[5] AI Agents B2B Marketing – https://thesmarketers.com/blogs/ai-agents-b2b-marketing/

[7] 7 Agentic AI Trends To Watch In 2026 – https://machinelearningmastery.com/7-agentic-ai-trends-to-watch-in-2026/

[8] Agentic AI Governance Frameworks 2026 Risks Oversight And Emerging Standards – https://hackernoon.com/agentic-ai-governance-frameworks-2026-risks-oversight-and-emerging-standards